Recently a friend of mine asked me which projection would be best for creating point-buffer circles. All map projections add distortion, so you rarely end up with a true circle. If you’re lucky you’ll get an ellipse, but often the shape is more complex than that. In many cases a “normal” circle is accurate enough, but it still got me thinking about how I would do this 100% accurately. There are many approaches, e.g. creating a new projection for each point or doing the math in spherical coordinates, but none of them is completely trivial.

The UTM projection is one of the most commonly used projections. It’s fairly accurate for point-to-point measurements over small distances close to the meridian, so this post is based on it. Most of the math here can also be applied to Mercator, where the scale reductions are applied to Y instead of X.

The Mercator projection is created by projecting the globe onto a cylinder whose axis coincides with the Earth’s rotation axis. The Transverse Mercator is basically a "tilted" cylinder whose axis lies in the plane of the equator. Along the line where the cylinder "touches" the surface the distortion is 0, and it increases as you move away from that line in the east/west direction. There is no distortion in the north/south direction. The Universal Transverse Mercator cylinder is slightly smaller than the globe, so it intersects the surface in two places. This keeps the distortion between and around these two lines minimal. Along the meridian (midway between the two lines) the scale factor for the UTM projection is 0.9996, meaning that this is the factor by which you have to reduce an infinitely small line in the east/west direction (for Mercator this is normally 1 along the equator). As you move away from the meridian, the scale factor increases toward infinity. Normally UTM projections are used close to the meridian, and whenever you get too far east or west you switch to a new UTM projection. That’s why the Earth is divided into 60 UTM ‘zones’, one for each 6 degrees of longitude. For practical reasons you will often want to use a UTM zone outside its defined usage area. Here you have to be especially careful when measuring distances. For example, Denmark is covered by zones 32 and 33, but it’s common to use only zone 32, which means that distance calculations at the eastern end can be very inaccurate without applying a scale reduction.

Warning: The following contains a lot of math. You can skip to the bottom and download a C# class that does all the calculations for you.

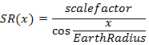

The scale factor SR(x) for any given point at distance 'x' from the meridian is given by:

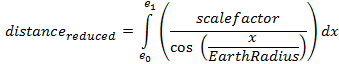
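As a minimal Python sketch of evaluating SR(x): the exact formula lives in the image above, so the version below is an assumption, namely the commonly used spherical approximation SR(x) = m·(1 + x²/(2r²)), with m = 0.9996 and r taken as the WGS84 semi-major axis. The function and constant names are made up for illustration.

```python
# Assumed constants (not taken from the post's images).
WGS84_RADIUS_M = 6378137.0   # Earth radius r, here the WGS84 semi-major axis
UTM_CENTRAL_SCALE = 0.9996   # scale factor m at the central meridian
FALSE_EASTING_M = 500000.0   # typical UTM false easting


def scale_reduction(x, m=UTM_CENTRAL_SCALE, r=WGS84_RADIUS_M):
    """Assumed SR(x) = m * (1 + x^2 / (2 r^2)).

    x is the distance from the central meridian in metres,
    i.e. the easting with the false easting already subtracted.
    """
    return m * (1.0 + (x * x) / (2.0 * r * r))


def scale_reduction_at_easting(easting, false_easting=FALSE_EASTING_M):
    """Convenience wrapper that takes a raw UTM easting."""
    return scale_reduction(easting - false_easting)


if __name__ == "__main__":
    print(scale_reduction(0.0))                  # on the central meridian
    print(scale_reduction_at_easting(600000.0))  # 100 km east of it
```

On the meridian this returns m itself, and the value grows symmetrically as you move east or west.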

Since this is the scale reduction at a point, we need to sum up all of these values along a line to find the correct distance, thus the east/west distance from e1 to e0 (expressed in distance from the meridian) becomes an integral expression:

To this you need to add the north/south distance using the well-known Euclidean distance formula.

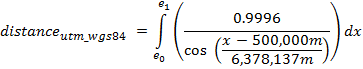

For a typical UTM using the WGS84 ellipsoid and a 500,000 m false easting it becomes:

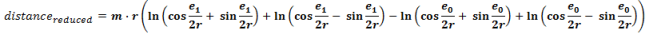

If you haven’t refreshed your integral math lately, or just want to write this in pure code, one can also write the expression as:

Where m is the scale factor at the meridian, r is the Earth’s radius, and e1 and e0 have the false easting subtracted.

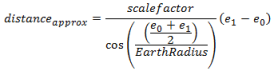
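A closed-form version could look like the Python sketch below. It assumes the scale factor is the spherical approximation SR(x) = m·(1 + x²/(2r²)), which integrates to m·[(e1 − e0) + (e1³ − e0³)/(6r²)]; the formula in the image may differ in detail. The function names and default values are illustrative.

```python
import math


def corrected_east_distance(e0, e1, m=0.9996, r=6378137.0,
                            false_easting=500000.0):
    """Integral of the assumed SR(x) from e0 to e1 (signed, in metres)."""
    # Work in distance from the central meridian.
    x0 = e0 - false_easting
    x1 = e1 - false_easting
    # Antiderivative of m * (1 + x^2 / (2 r^2)) is m * (x + x^3 / (6 r^2)).
    return m * ((x1 - x0) + (x1 ** 3 - x0 ** 3) / (6.0 * r * r))


def corrected_distance(e0, n0, e1, n1, **kwargs):
    """Scale-corrected easting combined with the raw northing difference."""
    east = corrected_east_distance(e0, e1, **kwargs)
    return math.hypot(east, n1 - n0)


if __name__ == "__main__":
    print(corrected_distance(400000.0, 6100000.0, 600000.0, 6250000.0))
```

Only the easting component is corrected, matching the approach in this post; the northing difference goes into the Euclidean formula unchanged.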

Often an approximate value is good enough: take the scale reduction at the midpoint between the start and end points and apply it to the whole line. This should only be used for distances of less than 1 km in easting, because it gets very inaccurate over greater distances.

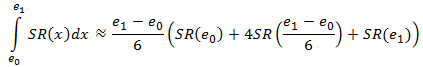
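Here is a small Python sketch of the approximate method. It reads "between the start and end point" as the scale reduction at the midpoint easting, which is the reading that matches the approximate figure in the example further down, and it again assumes SR(x) = m·(1 + x²/(2r²)). The names are hypothetical.

```python
import math


def approx_distance(e0, n0, e1, n1, m=0.9996, r=6378137.0,
                    false_easting=500000.0):
    """Distance using a single scale reduction taken at the midpoint easting."""
    # Midpoint of the line, expressed as distance from the central meridian.
    x_mid = 0.5 * (e0 + e1) - false_easting
    # Assumed scale factor formula, evaluated once.
    sr = m * (1.0 + (x_mid * x_mid) / (2.0 * r * r))
    # Scale the easting difference only, then use plain Euclidean distance.
    return math.hypot(sr * (e1 - e0), n1 - n0)


if __name__ == "__main__":
    print(approx_distance(400000.0, 6100000.0, 600000.0, 6250000.0))
```

Because the scale factor is evaluated once, the cost is close to that of the plain Euclidean formula.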

Simpson’s Rule

One can also use Simpson’s rule for calculating the integral solution. My tests showed it’s a very accurate approximation for this type of integral usually giving millimeter accuracy. It’s defined as:
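As a rough illustration in Python, the sketch below implements composite Simpson's rule and applies it to the easting integral. The scale function is passed in as a parameter; `sr_quadratic` is an assumed spherical approximation, not necessarily the formula used in this post. Simpson's rule is exact for polynomials up to degree three, so with this particular quadratic it reproduces the closed-form integral up to rounding.

```python
import math


def simpson(f, a, b, n=2):
    """Composite Simpson's rule for f on [a, b] with n (even) subintervals."""
    if n < 2 or n % 2:
        raise ValueError("n must be a positive even integer")
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        # Odd interior nodes get weight 4, even interior nodes weight 2.
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3.0


def sr_quadratic(x, m=0.9996, r=6378137.0):
    """Assumed scale factor: m * (1 + x^2 / (2 r^2))."""
    return m * (1.0 + (x * x) / (2.0 * r * r))


def simpson_distance(e0, n0, e1, n1, sr=sr_quadratic,
                     false_easting=500000.0, n=2):
    """Integrate sr over the easting range, then add the northing difference."""
    east = simpson(sr, e0 - false_easting, e1 - false_easting, n)
    return math.hypot(east, n1 - n0)


if __name__ == "__main__":
    print(simpson_distance(400000.0, 6100000.0, 600000.0, 6250000.0))
```

With n=2 this needs only three evaluations of the scale function per distance.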

I performed a few performance tests for 10 million calculations using all four methods for finding a distance in a UTM projection. Below is the processing time for each method:

| Method | Time for 10 million calculations |
| --- | --- |
| Euclidean | 4.38 s |
| Average / Linear | 4.49 s |
| Simpson’s | 6.94 s |
| Integral | 9.26 s |

The bottom line: if you want performance and the distance is small, use the averaging method; if you want accuracy, go with the integral method; if you want both, use Simpson’s rule.

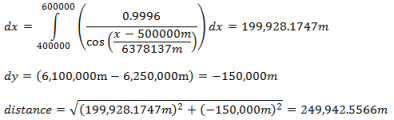

Distance calculation - example

start point : { E=400,000 ; N=6,100,000 }

end point : { E=600,000 ; N=6,250,000 }

- Distance using Simpson’s rule: 249,942.5569 m (error: 0.0003 m)

- Distance with approximate scale reduction: 249,936.0046 m (error: 6.54 m)

- Distance without scale reduction: 250,000 m (error: 57.46 m)

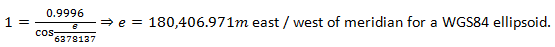

Where is scale reduction=1?

Solving SR(x) =1 gives us the distance from the meridian where the scale reduction is exactly 1:
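Under the assumption that SR(x) = m·(1 + x²/(2r²)) (a common spherical approximation, which may not be exactly the formula used here), solving SR(x) = 1 gives x = r·√(2·(1/m − 1)). A short Python sketch with hypothetical names:

```python
import math


def scale_reduction(x, m=0.9996, r=6378137.0):
    """Assumed scale factor: m * (1 + x^2 / (2 r^2))."""
    return m * (1.0 + (x * x) / (2.0 * r * r))


def unit_scale_offset(m=0.9996, r=6378137.0):
    """Distance from the meridian where the assumed SR(x) equals 1."""
    # m * (1 + x^2 / (2 r^2)) = 1  =>  x^2 = 2 r^2 (1/m - 1)
    return r * math.sqrt(2.0 * (1.0 / m - 1.0))


if __name__ == "__main__":
    x = unit_scale_offset()
    print(x)                      # offset in metres from the meridian
    print(scale_reduction(x))     # should be 1 up to rounding
    print(500000.0 - x, 500000.0 + x)  # matching eastings with a 500 km false easting
```

With the default values this lands roughly 180 km either side of the central meridian.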

Area calculation

To calculate the area of a polygon, apply the scale reduction to the line segments and then calculate the area as you normally would.

Creating perfect circles

Creating a circle with a fixed radius while taking scale reduction into account gives you an irregular shape in the projection. You can use the approximate method (using the scale factor at the center), which gives you an ellipse, since the scale factor for x is constant in this case. The formula for the approximate circle becomes r² = (SR·x)² + y², where SR is the scale reduction at the center of the circle and x and y are offsets from the center.
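The approximate circle can be sketched in Python as follows. Each vertex satisfies radius² = (SR·x)² + y², with x and y measured from the center, so the easting offset is divided by SR. The scale formula SR(x) = m·(1 + x²/(2r²)) and all names are assumptions made for illustration.

```python
import math


def approximate_circle(e_c, n_c, radius, segments=64, m=0.9996,
                       r=6378137.0, false_easting=500000.0):
    """Vertices of the ellipse that approximates a fixed-radius buffer.

    Uses one scale reduction, evaluated at the center (e_c, n_c).
    """
    x_c = e_c - false_easting
    sr = m * (1.0 + (x_c * x_c) / (2.0 * r * r))  # assumed SR at the center
    points = []
    for i in range(segments):
        t = 2.0 * math.pi * i / segments
        # Solve radius^2 = (sr * dx)^2 + dy^2 for a point at angle t.
        dx = radius * math.cos(t) / sr
        dy = radius * math.sin(t)
        points.append((e_c + dx, n_c + dy))
    return points


if __name__ == "__main__":
    ring = approximate_circle(600000.0, 6100000.0, 1000.0, segments=8)
    for easting, northing in ring:
        print(round(easting, 3), round(northing, 3))
```

Where SR is below 1 the ellipse is slightly wider in easting than a plain circle of the same radius, and where SR is above 1 it is slightly narrower.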

For "accurate" circles SR becomes dependent on X. To find this solution you need to isolate e1 from the above equations to find the scale corrected distance. My math is too rusty to figure that one out yet, but if you know, feel free to post it in the comments.

You can download a C# class that performs all four types of distance calculations here.