I’ve been playing a lot with the Roslyn API lately, and recently got the idea to use it for analyzing the source code of an API and reporting what the API looks like.

When I do API reviews, I often like to look at an Object Model Diagram, and I have often used Visual Studio for this by creating a new Object Model Diagram and dragging my class files onto it. It’s a somewhat great experience, but it has several limitations and bugs that make it tedious or misleading.

So I figured why not try and use Roslyn to generate these diagrams? After all, it’s basically just a list of members in a box. So I set out to create an HTML page with all the objects in a set of C# files.

The first trick is to just search for all C# files in a folder, add them to an AdhocWorkspace and compile the project:

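using System.IO;
using System.Linq;
using Microsoft.CodeAnalysis;
using Microsoft.CodeAnalysis.Text;

// Create an empty in-memory workspace with one solution and one C# project
var ws = new AdhocWorkspace();
var solutionInfo = SolutionInfo.Create(SolutionId.CreateNewId(), VersionStamp.Default);
ws.AddSolution(solutionInfo);
var projectInfo = ProjectInfo.Create(ProjectId.CreateNewId(), VersionStamp.Default, "CSharpProject", "CSharpProject", LanguageNames.CSharp);
ws.AddProject(projectInfo);

// Add every C# file in the folder as a document (path is the source folder)
foreach (var file in new DirectoryInfo(path).GetFiles("*.cs"))
{
    // Dispose the file stream once the text has been read
    using (var stream = File.OpenRead(file.FullName))
    {
        var sourceText = SourceText.From(stream);
        ws.AddDocument(projectInfo.Id, file.Name, sourceText);
    }
}

// Compile the single project so we can inspect its symbols
var project = ws.CurrentSolution.Projects.Single();
var compilation = await project.GetCompilationAsync().ConfigureAwait(false);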

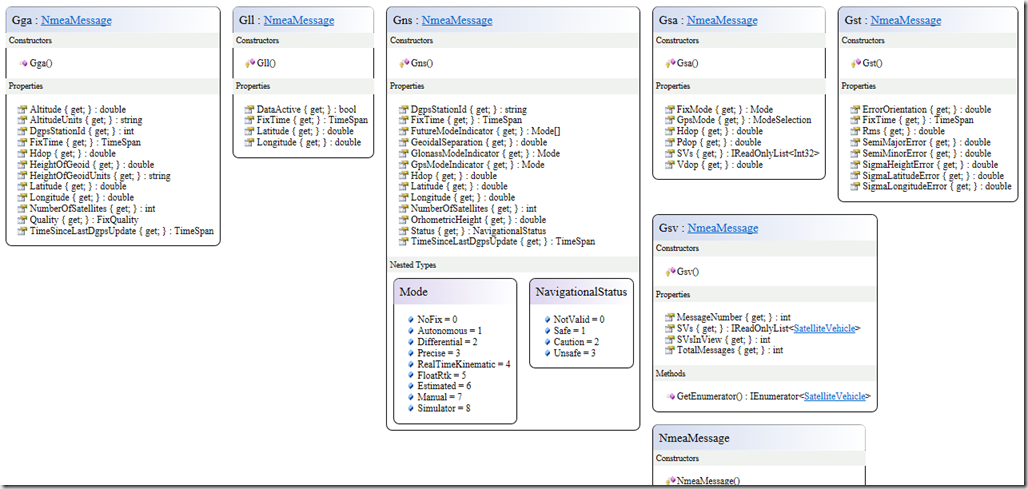

This quickly gives us a fully parsed set of C# files that we can iterate over to interrogate the members. By ignoring everything that’s internal or private and writing the rest out as some basic HTML, I can generate an object model that looks a lot like what Visual Studio produces. Here’s one such example from my NmeaParser library:

You can see the full object model here: /omds/NmeaParser.html
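To give an idea of what the "iterate and ignore internal or private" step can look like, here's a minimal sketch. It is not the generator's actual code: `PublicApiWalker` and its methods are hypothetical names, and it compiles a source string directly instead of going through a workspace. It also assumes protected members count as part of the visible API.

```csharp
using System.Collections.Generic;
using System.Linq;
using Microsoft.CodeAnalysis;
using Microsoft.CodeAnalysis.CSharp;

// Hypothetical helper, for illustration only.
public static class PublicApiWalker
{
    // Compiles a single source string in memory, referencing only the core library.
    public static Compilation Compile(string source) => CSharpCompilation.Create(
        "Sample",
        new[] { CSharpSyntaxTree.ParseText(source) },
        new[] { MetadataReference.CreateFromFile(typeof(object).Assembly.Location) },
        new CSharpCompilationOptions(OutputKind.DynamicallyLinkedLibrary));

    // Assumption: public and protected symbols are "visible"; internal and private are not.
    static bool IsVisible(ISymbol s) =>
        s.DeclaredAccessibility == Accessibility.Public ||
        s.DeclaredAccessibility == Accessibility.Protected ||
        s.DeclaredAccessibility == Accessibility.ProtectedOrInternal;

    // Recursively yields visible types in a namespace or type, including nested types.
    public static IEnumerable<INamedTypeSymbol> GetVisibleTypes(INamespaceOrTypeSymbol container)
    {
        foreach (var member in container.GetMembers())
        {
            if (member is INamespaceSymbol ns)
            {
                foreach (var t in GetVisibleTypes(ns)) yield return t;
            }
            else if (member is INamedTypeSymbol type && IsVisible(type))
            {
                yield return type;
                foreach (var t in GetVisibleTypes(type)) yield return t;
            }
        }
    }

    // Visible members of a type, skipping nested types, compiler-generated
    // members and property/event accessor methods.
    public static IEnumerable<ISymbol> GetVisibleMembers(INamedTypeSymbol type) =>
        type.GetMembers().Where(m =>
            IsVisible(m) &&
            !m.IsImplicitlyDeclared &&
            !(m is INamedTypeSymbol) &&
            (m as IMethodSymbol)?.AssociatedSymbol == null);
}
```

Pass `compilation.Assembly.GlobalNamespace` (rather than `compilation.GlobalNamespace`) to `GetVisibleTypes` so that only types declared in the source files are listed, not everything in the referenced assemblies.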

Note that you can hover on classes, members and parameters and you’ll get the <summary/> API Reference description for them. Known types that are in the object model are also clickable for quickly navigating the view.
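The hover text comes from the XML doc comments, which Roslyn exposes on each symbol. Here's a rough sketch of how the summary could be pulled out. `SummaryReader` is a made-up name, not something from the tool, and the sketch assumes the source was parsed with documentation comments enabled (the default for `CSharpSyntaxTree.ParseText`).

```csharp
using System.Linq;
using System.Xml;
using System.Xml.Linq;
using Microsoft.CodeAnalysis;
using Microsoft.CodeAnalysis.CSharp;

// Hypothetical helper, for illustration only.
public static class SummaryReader
{
    // Compiles a single source string in memory, referencing only the core library.
    public static Compilation Compile(string source) => CSharpCompilation.Create(
        "Sample",
        new[] { CSharpSyntaxTree.ParseText(source) },
        new[] { MetadataReference.CreateFromFile(typeof(object).Assembly.Location) },
        new CSharpCompilationOptions(OutputKind.DynamicallyLinkedLibrary));

    // Returns the trimmed text of the symbol's <summary> element,
    // or null if there is no doc comment or it can't be parsed.
    public static string GetSummary(ISymbol symbol)
    {
        var xml = symbol.GetDocumentationCommentXml();
        if (string.IsNullOrWhiteSpace(xml)) return null;
        try
        {
            var summary = XElement.Parse(xml).DescendantsAndSelf("summary").FirstOrDefault();
            return summary?.Value.Trim();
        }
        catch (XmlException)
        {
            // Malformed doc comment: treat it as "no summary"
            return null;
        }
    }
}
```

For example, `SummaryReader.GetSummary(compilation.GetTypeByMetadataName("Foo"))` would return the summary text for a documented class `Foo`, which can then be written into a `title` attribute or similar in the HTML.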

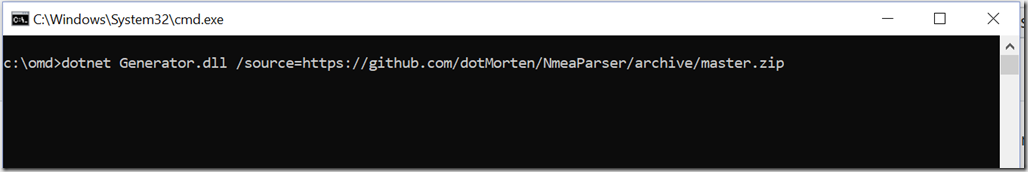

I created a .NET Core console app that builds this. All you do is set the source parameter to a folder of source code, and the tool runs and spits out an “OMD.html” file. I found myself often wanting to generate an OMD of a GitHub repo, so as a shortcut I added an option to point straight to the zip-download on GitHub. The above object model diagram is done with this simple command:

I’ve already started using this tool in my day-to-day work, as well as in some of my online community work, like the UWP Community Toolkit. What I’ve found is that inconsistencies and poor naming really stand out like daggers in your eyes when looking at an object model, compared to scanning over hundreds or thousands of lines of code. Internal code is easy to fix later. But if you get the public object model wrong, you’re stuck between introducing breaking changes, or living with the poor API design forever.

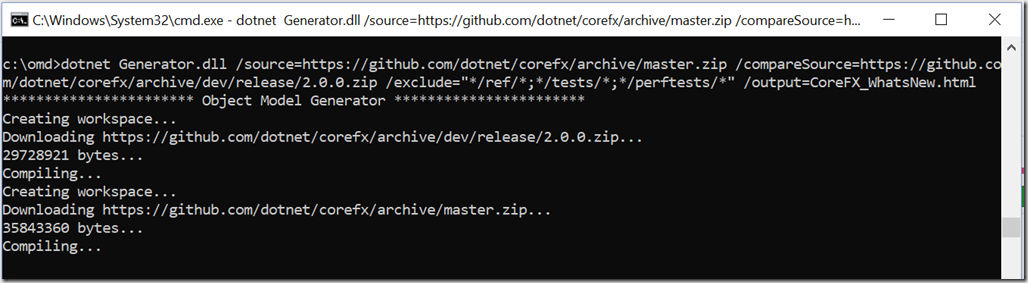

Want to go overboard? Try generating a giant OMD for the entire .NET Core repo (we’ll exclude all the test folders):

dotnet Generator.dll /source=https://github.com/dotnet/corefx/archive/master.zip /exclude="*/ref/*;*/tests/*;*/perftests/*" /output=CoreFX.html

You can also see the generated output here: /omds/CoreFX.html

Commandline? Where’s my NuGet?

What if you want to just create an object model when you build? Well, there’s a NuGet for that. Add a NuGet reference to “dotMorten.OMDGenerator”, and each time you build your C# Class Library, an HTML file is also generated. It will slow your build down slightly, so you might not want it enabled all the time, but it’s an easy way to quickly generate an object model. Note though that this is limited to generating an OMD for a single project, and not a combined OMD for all your projects.

Analyzing differences

Another thing I very often do is look at pull requests, and when you have hundreds of objects, it’s not really useful to be looking at the entire object model. Instead you want to focus on what changed, and whether it introduced any breaking changes. I basically needed a diffing tool.

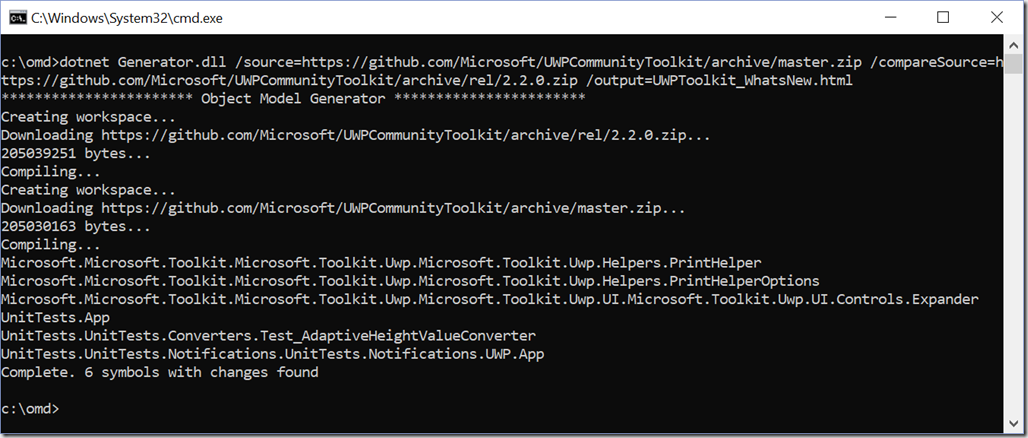

Again it was rather easy to use Roslyn to do this. I basically created a list of objects for two source code folders, and walked through them member by member. By using Roslyn’s “ToDisplayString” method with a full-format string, it’s really just a matter of comparing the before/after generated strings to detect a change, and only printing out the classes and members that changed. I then chose to render anything that was removed as red and strike-through to make it really clear what has changed. Again with PRs this makes it really easy. For instance here’s how to do a comparison between

If you want to try it yourself, here’s the commandline tool:

dotnet Generator.dll /source=https://github.com/Microsoft/UWPCommunityToolkit/archive/master.zip /compareSource=https://github.com/Microsoft/UWPCommunityToolkit/archive/rel/2.2.0.zip /exclude="*/UnitTests/*" /output=UWPToolkit_WhatsNew.html

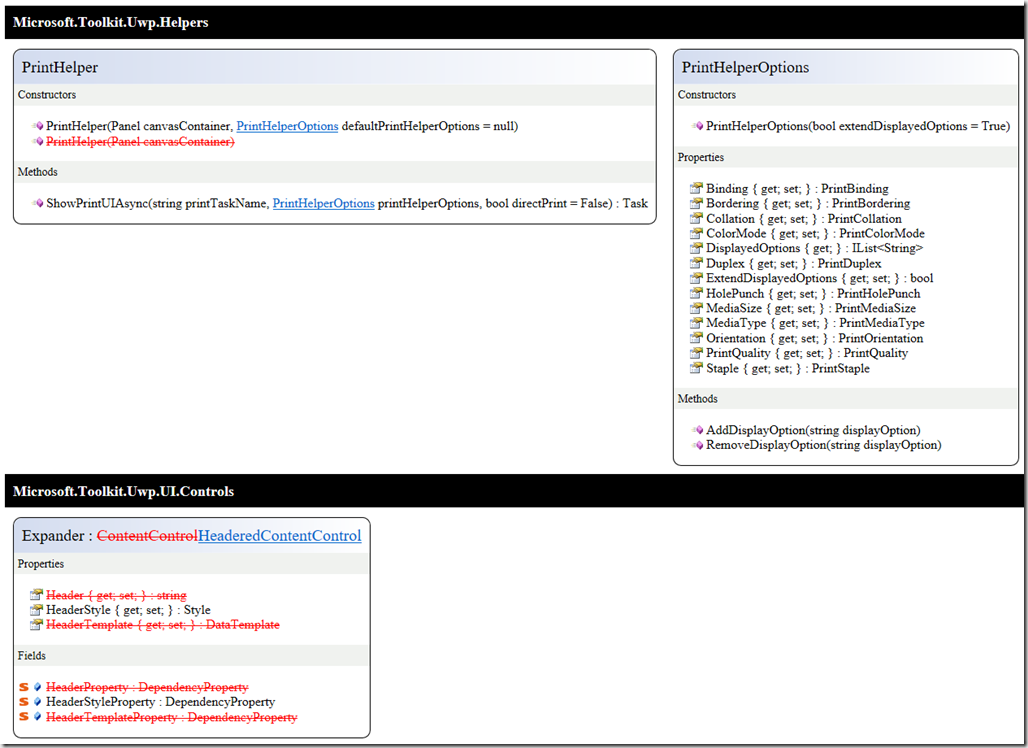

And here’s some of what that generates as of today:

You’ll notice that there are several changes highlighted in red. These are not necessarily breaking changes. Some of them are (the UWP Toolkit is currently working on v3.0, which includes cleaning up some old stuff and will have breaking changes), and some could just be a slight change in signature.
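As for how such a change list can be computed, the display-string comparison mentioned earlier can be sketched roughly like this. It's a simplified illustration under my own assumptions, not the tool's implementation: `ApiDiff` is a hypothetical name, the `SymbolDisplayFormat` settings are just one reasonable choice of "full" format, and only public types and members are compared (base types and interfaces are not part of the string here).

```csharp
using System.Collections.Generic;
using System.Linq;
using Microsoft.CodeAnalysis;
using Microsoft.CodeAnalysis.CSharp;

// Hypothetical helper, for illustration only.
public static class ApiDiff
{
    // A verbose format so accessibility, modifiers, return type and parameters all end up in the string.
    static readonly SymbolDisplayFormat Format = new SymbolDisplayFormat(
        typeQualificationStyle: SymbolDisplayTypeQualificationStyle.NameAndContainingTypesAndNamespaces,
        genericsOptions: SymbolDisplayGenericsOptions.IncludeTypeParameters,
        memberOptions: SymbolDisplayMemberOptions.IncludeContainingType |
                       SymbolDisplayMemberOptions.IncludeParameters |
                       SymbolDisplayMemberOptions.IncludeType |
                       SymbolDisplayMemberOptions.IncludeModifiers |
                       SymbolDisplayMemberOptions.IncludeAccessibility,
        parameterOptions: SymbolDisplayParameterOptions.IncludeType |
                          SymbolDisplayParameterOptions.IncludeName |
                          SymbolDisplayParameterOptions.IncludeParamsRefOut |
                          SymbolDisplayParameterOptions.IncludeDefaultValue,
        propertyStyle: SymbolDisplayPropertyStyle.ShowReadWriteDescriptor,
        miscellaneousOptions: SymbolDisplayMiscellaneousOptions.UseSpecialTypes);

    static Compilation Compile(string source) => CSharpCompilation.Create(
        "Sample",
        new[] { CSharpSyntaxTree.ParseText(source) },
        new[] { MetadataReference.CreateFromFile(typeof(object).Assembly.Location) },
        new CSharpCompilationOptions(OutputKind.DynamicallyLinkedLibrary));

    // Recursively yields public types, including nested ones.
    static IEnumerable<INamedTypeSymbol> PublicTypes(INamespaceOrTypeSymbol container)
    {
        foreach (var member in container.GetMembers())
        {
            if (member is INamespaceSymbol ns)
            {
                foreach (var t in PublicTypes(ns)) yield return t;
            }
            else if (member is INamedTypeSymbol type && type.DeclaredAccessibility == Accessibility.Public)
            {
                yield return type;
                foreach (var t in PublicTypes(type)) yield return t;
            }
        }
    }

    // One display string per public type and per public member.
    public static HashSet<string> GetSignatures(string source)
    {
        var result = new HashSet<string>();
        foreach (var type in PublicTypes(Compile(source).Assembly.GlobalNamespace))
        {
            result.Add(type.ToDisplayString(Format));
            foreach (var m in type.GetMembers())
            {
                bool isAccessor = (m as IMethodSymbol)?.AssociatedSymbol != null;
                if (m.DeclaredAccessibility == Accessibility.Public && !m.IsImplicitlyDeclared &&
                    !(m is INamedTypeSymbol) && !isAccessor)
                    result.Add(m.ToDisplayString(Format));
            }
        }
        return result;
    }

    // Anything only in "before" was removed (or changed); anything only in "after" was added.
    public static (List<string> Removed, List<string> Added) Compare(string before, string after)
    {
        var oldApi = GetSignatures(before);
        var newApi = GetSignatures(after);
        return (oldApi.Except(newApi).OrderBy(s => s).ToList(),
                newApi.Except(oldApi).OrderBy(s => s).ToList());
    }
}
```

A changed signature shows up as one removed string plus one added string, which is why a red entry isn't automatically a breaking change: the comparison only knows the text is different, not whether callers still compile.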

We can also repeat this for .NET Core, and see what has changed since v2.0:

You can see the full change-list here: /omds/CoreFx_WhatsNew.html

Note that this will show breaking changes in .NET Core. But fear not: just because something was in the v2.0.0 branch doesn’t mean it shipped. There were things that weren’t actually released, and have been changed since. Another issue is that the source structure has changed, and some base types were not there before. This caused some of the types to look as if they have changed. The point is that the analysis is only as good as the source code it has access to. Another example of this you’ll see is if a class implements an interface, but doesn’t have a base class, and that interface isn’t part of the source code: there’s no way for Roslyn to know if it’s an interface or a base class, and it’ll end up listing the interface as a base class.
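One way to at least spot these cases is to look for types Roslyn couldn't resolve, which it represents as symbols with `TypeKind.Error`. The sketch below is an illustration only (the `UnresolvedTypes` helper is hypothetical and not part of the generator); it checks both the base type slot and the interface list, since an unresolved name could land in either.

```csharp
using System.Collections.Generic;
using System.Linq;
using Microsoft.CodeAnalysis;
using Microsoft.CodeAnalysis.CSharp;

// Hypothetical helper, for illustration only.
public static class UnresolvedTypes
{
    // Compiles a single source string in memory, referencing only the core library.
    public static Compilation Compile(string source) => CSharpCompilation.Create(
        "Sample",
        new[] { CSharpSyntaxTree.ParseText(source) },
        new[] { MetadataReference.CreateFromFile(typeof(object).Assembly.Location) },
        new CSharpCompilationOptions(OutputKind.DynamicallyLinkedLibrary));

    // Names of base types or interfaces that Roslyn could not bind to a real type.
    public static List<string> GetUnresolvedBases(INamedTypeSymbol type)
    {
        var candidates = new List<INamedTypeSymbol>();
        if (type.BaseType != null) candidates.Add(type.BaseType);
        candidates.AddRange(type.Interfaces);
        return candidates
            .Where(t => t.TypeKind == TypeKind.Error)
            .Select(t => t.Name)
            .ToList();
    }
}
```

If this returns anything for a type, the base class and interface information shown for that type should be taken with a grain of salt, since the source for those names wasn't available.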

Also there are probably still some bugs left. Not only will it show things that might not be a breaking change as a breaking change – it might also overlook changes, so use it as a tool to help you, but not as the end-all-be-all public API review tool.

Gimme the source-code already!

Yup! It’s right here: https://github.com/dotMorten/DotNetOMDGenerator

I take pull-requests too! If you see a bug, feel free to submit a PR (or at the very least submit an issue).

The future…

Things I’d like to add support for (and wouldn’t mind help with):

- Assembly-based analysis (i.e. post-compilation).

- Use solutions and project files as inputs

- Git-hook that automatically injects an object model of changes (if there are changes) when a user submits a pull request.

- Support for accessing the zip-download from private repos

- Support for specifying two branches in a local repo, instead of having to have two separate source folders.